Certified AI Response Analyst

The JUDGE Framework

The Future Skill Is Not Asking AI Questions.

It’s Evaluating AI Answers. – Introducing the JUDGE Framework

AI tools can now generate reports, summarize research, write code, and analyze data in seconds.

Work that once required junior analysts, entry-level programmers, and research assistants can now be produced by AI.

But this does not eliminate the need for humans. Instead, it creates a new and increasingly important role inside organizations.

AI answers often sound confident, even when they are:

• partially incorrect

• missing important context

• biased toward certain viewpoints

• based on outdated information

Before AI output is used in a report, presentation, or decision, someone must answer one critical question:

Can this AI output be trusted?

That responsibility increasingly belongs to people who can evaluate AI responses critically.

A Skill Most AI Courses Don’t Teach

Most AI courses today focus on:

• prompt engineering

• generating content with AI

• automating tasks

Those skills help people produce AI output faster.

But they do not address a growing need inside organizations:

evaluating whether AI output should actually be trusted.

This course focuses on that missing skill.

Introducing the AI Output Evaluation Course

A practical program from Fifty Is Nifty designed to teach students and professionals how to analyze AI-generated responses before they influence decisions.

Participants learn how to:

✔ detect incorrect AI answers

✔ identify weak reasoning

✔ spot missing information

✔ recognize bias and ethical concerns

✔ guide AI toward better responses

In short, this course teaches how to move from using AI to supervising AI.

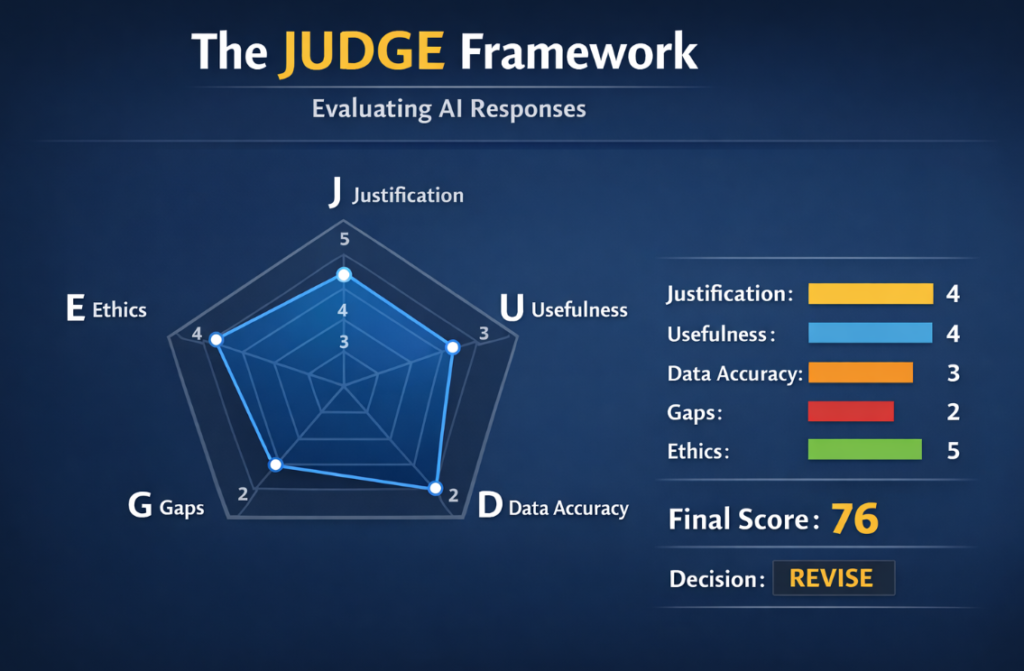

The JUDGE Framework

The course introduces a structured evaluation method called JUDGE.

Participants learn to analyze AI responses across five dimensions.

| JUDGE | What It Evaluates |
|---|---|
| J | Justification – Does the answer explain its reasoning? |
| U | Usefulness – Is the response helpful and relevant? |
| D | Data Accuracy – Are the facts correct? |
| G | Gaps – Is important information missing? |
| E | Ethics – Is the response responsible and unbiased? |

Using this framework, participants learn to decide whether an AI response should be accepted, revised, or rejected:

Accept • Revise • Reject
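For readers who like to see a rubric written down, here is one minimal sketch of how a JUDGE review could be recorded and turned into a verdict. The five dimension names come from the table above. Everything else is an assumption made for illustration and is not the course's official scoring method: the 1 to 5 rating scale, the `JudgeReview` class, and the cut-off rules are all hypothetical.

```python
from dataclasses import dataclass

# The five JUDGE dimensions, named after the rows of the table above.
DIMENSIONS = ("justification", "usefulness", "data_accuracy", "gaps", "ethics")


@dataclass
class JudgeReview:
    """One reviewer's ratings of a single AI response.

    Assumption: each dimension is rated from 1 (poor) to 5 (excellent).
    For "gaps", a higher rating means less important information is missing.
    """

    justification: int
    usefulness: int
    data_accuracy: int
    gaps: int
    ethics: int

    def verdict(self) -> str:
        scores = [getattr(self, name) for name in DIMENSIONS]
        if any(not 1 <= score <= 5 for score in scores):
            raise ValueError("each rating must be between 1 and 5")
        weakest = min(scores)
        # Hypothetical rule: one seriously weak dimension sinks the response.
        if weakest <= 2:
            return "Reject"
        # Hypothetical rule: accept only when every dimension is strong.
        if weakest >= 4:
            return "Accept"
        # Anything in between goes back for another round of prompting.
        return "Revise"


if __name__ == "__main__":
    review = JudgeReview(justification=5, usefulness=4, data_accuracy=3, gaps=4, ethics=5)
    print(review.verdict())
```

The sketch bases the verdict on the weakest dimension rather than an average, so a fluent answer with incorrect facts cannot be rescued by high marks elsewhere. A team could reasonably choose different thresholds or weight the dimensions differently.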

What You Will Learn

Participants will learn how to:

• evaluate AI-generated responses using the JUDGE framework

• detect hallucinations and factual errors

• identify gaps in AI reasoning

• compare multiple AI outputs

• improve prompts to generate better responses

• understand ethical risks of AI systems

The course includes hands-on exercises analyzing real AI-generated responses.

Course Modules

The program includes the following modules:

1. AI and the Changing Workforce

How AI is reshaping entry-level work.

2. How AI Generates Answers

Understanding hallucinations and reasoning limitations.

3. The JUDGE Framework

A structured approach to evaluating AI output.

4. Detecting AI Errors

Identifying incorrect facts and missing context.

5. Improving AI Responses

Learning how better prompts lead to better answers.

6. Ethical AI Use

Recognizing bias and deploying AI responsibly.

7. Certification Exam

Certification

Participants who successfully complete the course and pass the exam receive:

Certified AI Response Analyst (CAIRA)

Issued by Fifty Is Nifty

The certification demonstrates the ability to evaluate AI-generated responses and support better decision-making.

Who This Course Is For

This program is designed for:

Students

Preparing for careers where AI tools will be part of everyday work.

Professionals

Who want to move beyond simply using AI tools.

Managers and Analysts

Who must evaluate AI outputs before relying on them in decisions.

No programming background required.

The AI Era Is Not Only About Using AI

It is about knowing when AI is right — and when it is wrong.

Register Your Interest

Interested in learning this critical skill?

Join the interest list to receive updates about upcoming sessions of the course.