The JUDGE Framework: A Simple Way to Evaluate AI Responses

Artificial intelligence (AI) can now generate answers to almost any question. Students use it for assignments, professionals use it for research, and businesses use it for data analysis.

But an important question remains:

If AI generates the answer, how do we know whether the answer is actually reliable?

AI systems sometimes produce responses that sound convincing but contain incorrect facts, hidden assumptions, missing information, or bias.

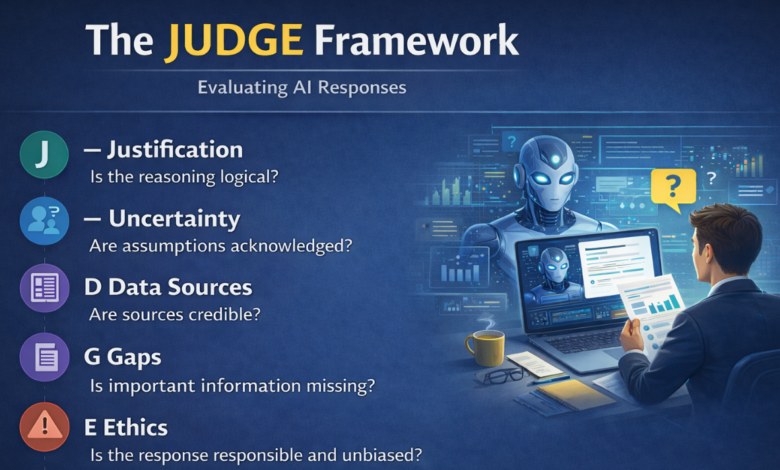

A simple approach called the JUDGE Framework can help you evaluate AI-generated responses more carefully.

The JUDGE Framework

The JUDGE Framework provides a structured way to review AI-generated answers before relying on them.

What It Evaluates

J — Justification

Is the reasoning logical?

Does the response clearly explain how it reached its conclusion?

Or does it simply present an answer without supporting reasoning?

U — Uncertainty

Are assumptions acknowledged?

Does the response recognize uncertainty or limitations?

Or does it present speculation as if it were a confirmed fact?

D — Data Sources

Are the sources credible?

Are the facts based on reliable information, or could they be outdated, fabricated, or unsupported?

G — Gaps

Is important information missing?

Does the response overlook key context, perspectives, or conditions needed to fully understand the topic?

E — Ethics

Is the response responsible and unbiased?

Could the answer promote harmful advice, misleading conclusions, or biased perspectives?

Making the Final Decision

After reviewing an AI response using the JUDGE Framework, three outcomes are possible:

✔ Accept – The response is reliable enough to use.

✏ Revise – The response has useful elements but needs correction or clarification.

✖ Reject – The response is inaccurate or potentially harmful.
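For readers who want to apply the framework consistently, the review and the three outcomes can be sketched in a few lines of Python. This is only an illustration: the names `JudgeReview`, `Verdict`, and `decide` are hypothetical, and the rule that maps the five checks to an outcome is an assumption, since the framework itself does not prescribe one. Here a failed Data Sources or Ethics check is treated as "inaccurate or potentially harmful" and leads to Reject, any other failed check leads to Revise, and a response that passes all five checks is accepted.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ACCEPT = "accept"
    REVISE = "revise"
    REJECT = "reject"


@dataclass
class JudgeReview:
    """A reviewer's yes/no answers to the five JUDGE checks (True = passes)."""
    justification: bool  # reasoning is logical and clearly explained
    uncertainty: bool    # assumptions and limitations are acknowledged
    data_sources: bool   # facts rest on credible, current sources
    gaps: bool           # no important information is missing
    ethics: bool         # response is responsible and unbiased


def decide(review: JudgeReview) -> Verdict:
    # Assumed rule, not part of the framework: unreliable facts or
    # ethical problems count as "inaccurate or potentially harmful".
    if not review.data_sources or not review.ethics:
        return Verdict.REJECT
    # Every remaining check passes: reliable enough to use.
    if review.justification and review.uncertainty and review.gaps:
        return Verdict.ACCEPT
    # Useful, but needs correction or clarification.
    return Verdict.REVISE


# Example: a well-sourced answer that states speculation as fact.
review = JudgeReview(
    justification=True,
    uncertainty=False,
    data_sources=True,
    gaps=True,
    ethics=True,
)
print(decide(review).value)
```

A stricter or more lenient rule may suit a given context better; the point is only that writing the checks down makes the final decision repeatable.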

Why Evaluating AI Responses Matters

AI is becoming an increasingly powerful tool, but it is not perfect. AI systems can:

- generate incorrect facts

- overlook important context

- express high confidence in uncertain information

- unintentionally reflect bias from training data

The ability to critically evaluate AI-generated content will become an essential skill for students, professionals, and decision-makers.

In the future, success will depend not only on using AI tools, but also on judging their outputs wisely.

A Simple Question to Ask

The next time you receive an answer from an AI system, pause for a moment and ask:

Does this response pass the JUDGE test?

Developing this habit can help ensure that AI becomes a powerful assistant — not a source of misinformation.